When RAG Started Thinking for Itself: The Story of Agentic RAG


1. The Beginning: When AI Knew, But Didn’t Understand

A few years ago, when the first wave of Generative AI models arrived, the world was amazed.

Chatbots could summarize books, answer questions, and write poetry — all within seconds.

But there was a quiet limitation behind all that brilliance:

they didn’t actually know what was happening beyond their training data.

Imagine asking a brilliant student a question about a new medical study —

they could sound confident, but if they hadn’t read that specific study, their answer was just… guesswork.

That’s where RAG — Retrieval-Augmented Generation — stepped in.

It gave AI access to external knowledge, allowing it to retrieve real facts before generating answers.

Suddenly, the student (the AI) could open the right book before speaking.
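To make "open the right book before speaking" concrete, here is a minimal sketch of the classic retrieve-then-generate flow. Everything in it is an assumption for illustration: the three documents are made up, retrieval is plain word overlap rather than a real vector search, and the final call to a language model is left out, so the sketch stops at the prompt that would be sent.

```python
import re

# Toy in-memory "knowledge base"; the documents are invented for illustration.
DOCUMENTS = [
    "Study A (2023): drug X lowered blood sugar in adults with type 2 diabetes.",
    "Study B (2019): regular exercise improves sleep quality in older adults.",
    "Guide C (2024): kidney function should be monitored in diabetes patients.",
]

def tokenize(text):
    """Lowercase the text and split it into a set of words and numbers."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, documents, top_k=2):
    """Rank documents by how many words they share with the question."""
    q = tokenize(question)
    ranked = sorted(documents, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:top_k]

def build_prompt(question, passages):
    """Place the retrieved passages in front of the question."""
    context = "\n".join(f"- {p}" for p in passages)
    return f"Answer using only these sources:\n{context}\n\nQuestion: {question}"

question = "How should diabetes patients monitor kidney function?"
passages = retrieve(question, DOCUMENTS)
prompt = build_prompt(question, passages)  # this is what a model would receive
print(prompt)
```

Note that the pipeline runs exactly once: whatever the single retrieval step returns is what the model gets to work with.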

The world of enterprise AI, healthcare, and research rejoiced.

Finally, models could back their words with data.

2. The Problem: When Knowledge Isn’t Enough

But soon, a new problem appeared.

RAG could fetch data, yes — but it couldn’t reason about it.

It retrieved what it was told to, not what it should have looked for.

If you asked it a complex question like,

“Based on studies from the last two years, what’s the most effective treatment for diabetes patients with kidney complications?”

…it would retrieve medical data — but maybe from the wrong year, or without verifying context.

It lacked judgment.

It couldn’t plan.

It couldn’t verify.

It was like a librarian who brings you ten books, but doesn’t know which one holds the answer.

Enter the next chapter of this story.

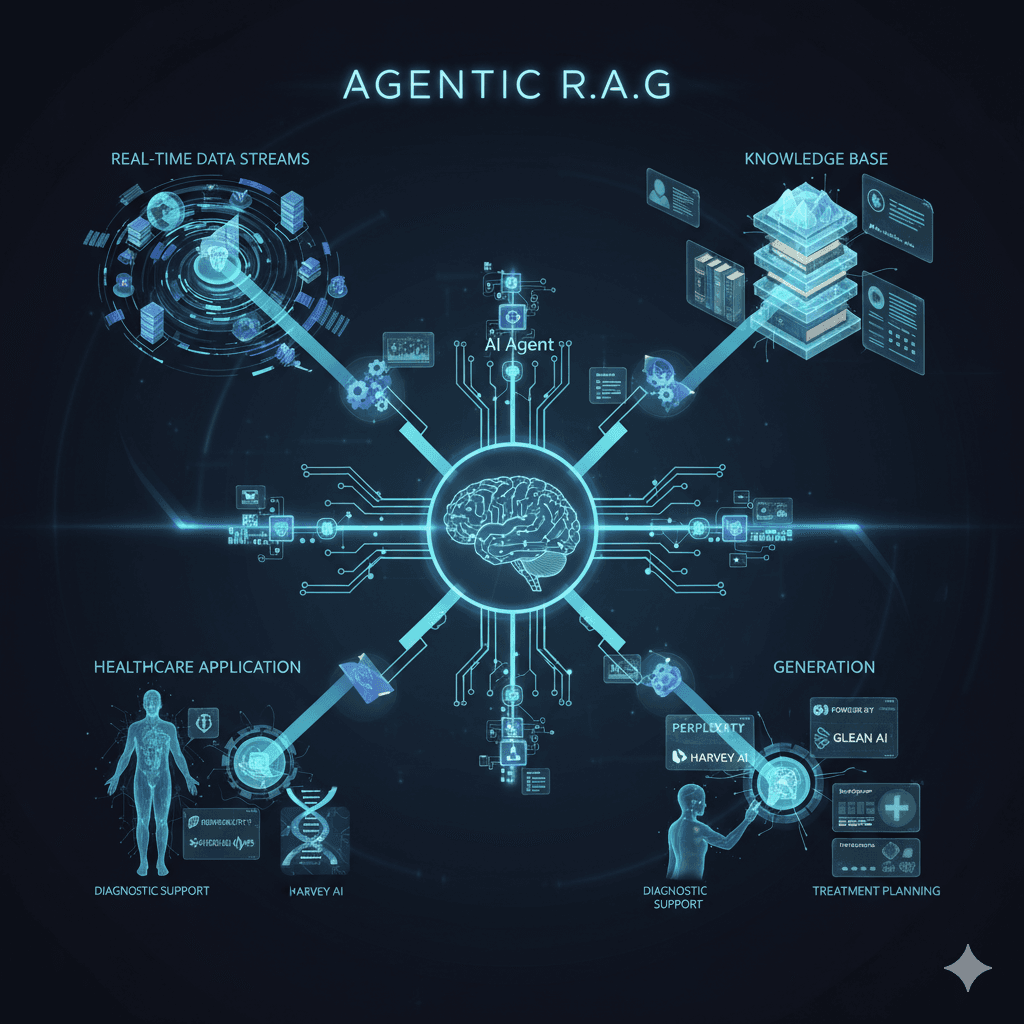

3. The Turning Point: When AI Became Agentic

Somewhere in a lab — maybe at OpenAI, maybe at Perplexity, maybe at Harvey AI —

researchers began asking a different question:

“What if retrieval itself could think?”

That’s when Agentic RAG was born.

Instead of a simple pipeline — retrieve, then generate —

the model now had an intelligent agent sitting in the middle.

This agent could reason, plan, and act autonomously.

When you asked it a question, it didn’t just look once.

It thought, “I need to verify this,” or “Maybe I should search another source.”

It started:

Decomposing the query into smaller parts.

Fetching data from multiple databases or APIs.

Cross-verifying results.

Synthesizing them into a coherent, accurate narrative.

In essence, the librarian became a research assistant — curious, analytical, and proactive.
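The four behaviors above (decompose, fetch, cross-verify, synthesize) can be sketched in a few lines. This is a toy under loud assumptions: the two sources (`clinical_db`, `guideline_api`) and their contents are hypothetical, and the keyword-based `decompose` stands in for what would really be a language model planning step.

```python
from collections import Counter

# Two hypothetical sources; in practice these would be databases or APIs.
SOURCES = {
    "clinical_db": {
        "diabetes treatment": "drug X",
        "kidney complications": "dose adjustment",
    },
    "guideline_api": {
        "diabetes treatment": "drug X",
        "kidney complications": "specialist referral",
    },
}

def decompose(question):
    """Stand-in for an LLM planner: pick out the sub-topics we know about."""
    known_topics = ["diabetes treatment", "kidney complications"]
    q = question.lower()
    return [t for t in known_topics if all(word in q for word in t.split())]

def fetch(sub_query):
    """Ask every source the same sub-question."""
    return {name: data.get(sub_query) for name, data in SOURCES.items()}

def cross_verify(answers):
    """Accept an answer only if a majority of the sources agree on it."""
    found = [a for a in answers.values() if a is not None]
    if not found:
        return None
    value, count = Counter(found).most_common(1)[0]
    return value if count > len(answers) / 2 else None

def synthesize(question):
    """Combine the verified findings, flagging anything left unverified."""
    lines = []
    for sub in decompose(question):
        verified = cross_verify(fetch(sub))
        status = verified if verified else "sources disagree, needs review"
        lines.append(f"{sub}: {status}")
    return lines

report = synthesize("What is the best treatment for diabetes with kidney complications?")
print("\n".join(report))
```

The design choice worth noticing is that disagreement between sources is reported rather than hidden, which is the difference between handing over ten books and saying which one to trust.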

4. The Real-World Impact: From Desks to Diagnosis Rooms

Soon, this new way of reasoning spread across industries.

In Healthcare:

Hospitals began exploring Agentic RAG systems to analyze real-time patient data.

Instead of retrieving a list of potential treatments, the system would reason through each case —

filtering by age, medical history, and recent clinical studies — before suggesting the most relevant information.

Doctors didn’t just get data;

they got insights.

In Legal Firms:

Tools like Harvey AI turned complex legal document reviews into intelligent conversations.

Lawyers could ask,

“What precedents strengthen this case based on recent judgments?”

and the AI would search, reason, and explain its logic —

something traditional RAG could never do.

In Enterprises:

Platforms like Glean AI and Perplexity AI began helping teams find not just files,

but meaning — connecting scattered knowledge across emails, documents, and APIs,

and explaining why those insights mattered.

Agentic RAG wasn’t just fetching data.

It was connecting the dots.

5. The Architecture Behind the Magic

Behind the scenes, Agentic RAG looks like a symphony in motion:

User asks a question →

The agent interprets the intent and decides what information is missing.

Agent plans the path →

It might say, “Let’s first search the database, then verify through the web API.”

Multi-step retrieval →

It collects data iteratively, refining its search after each result.

Reasoning layer →

The agent validates, compares, and filters irrelevant data.

Generation layer →

Finally, the model crafts a clear, verified, and contextual response.

Each answer becomes a mini research journey, not just a static output.
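Here is one way that journey could look as a loop, offered as a rough sketch rather than a reference design. The `Doc` records, the `database` and `web_api` tools, and the year cutoff used as the "reasoning layer" are all hypothetical, and the generation step is reduced to joining the verified evidence into a sentence.

```python
from dataclasses import dataclass

@dataclass
class Doc:
    text: str
    year: int
    source: str

# Hypothetical tools the agent may call; contents are illustrative only.
TOOLS = {
    "database": [Doc("Older trial of therapy A for diabetic kidney disease", 2018, "database")],
    "web_api": [Doc("Recent trial of therapy B for diabetic kidney disease", 2024, "web_api")],
}

def search(tool, keyword):
    """Retrieval: return the tool's documents that mention the keyword."""
    return [d for d in TOOLS[tool] if keyword in d.text.lower()]

def is_relevant(doc, min_year):
    """Reasoning layer stand-in: reject evidence older than the cutoff."""
    return doc.year >= min_year

def run_agent(keyword, min_year, max_steps=3):
    plan = ["database", "web_api"]  # planning: which tools, in which order
    evidence, trace = [], []
    for step, tool in enumerate(plan[:max_steps], start=1):
        hits = search(tool, keyword)                          # retrieve
        kept = [d for d in hits if is_relevant(d, min_year)]  # validate
        trace.append(f"step {step}: {tool} returned {len(hits)}, kept {len(kept)}")
        evidence.extend(kept)
        if evidence:  # enough verified evidence, so stop searching
            break
    # Generation stand-in: summarize only what survived validation.
    answer = "; ".join(f"{d.text} ({d.year}, via {d.source})" for d in evidence)
    return answer or "No verified evidence found.", trace

answer, trace = run_agent("kidney", min_year=2023)
print("\n".join(trace))
print(answer)
```

The first search finds a document but the agent discards it as too old, so it moves on to the next tool instead of answering with stale evidence. The trace is what turns a static output into a record of how the answer was reached.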

6. Why This Matters: The Human Connection

At its core, Agentic RAG brings AI closer to human cognition.

Humans don’t answer instantly — we think, search, verify, and conclude.

Now, AI can too.

This evolution is more than technical — it’s philosophical.

It moves AI from being a tool that retrieves to a partner that reasons.

And that shift unlocks a new world of possibilities:

Doctors getting real-time, contextual support.

Lawyers navigating complex cases with confidence.

Analysts discovering patterns no dashboard could show.

7. The Future: When Machines Become Thought Partners

We’re entering a future where Agentic RAG systems will no longer just sit behind chatbots —

they’ll power enterprise copilots, research assistants, and decision engines.

AI will not only know — it will understand.

It will not only retrieve — it will reason.

The line between machine knowledge and human insight will begin to blur —

and together, they’ll redefine how we discover truth.

Epilogue

So, the next time you ask an AI a question and it gives you a thoughtful, well-verified answer —

remember:

that’s not just a chatbot at work.

That’s Agentic RAG — the mind behind the machine, reasoning in real time,

helping us move from information overload to intelligent understanding.