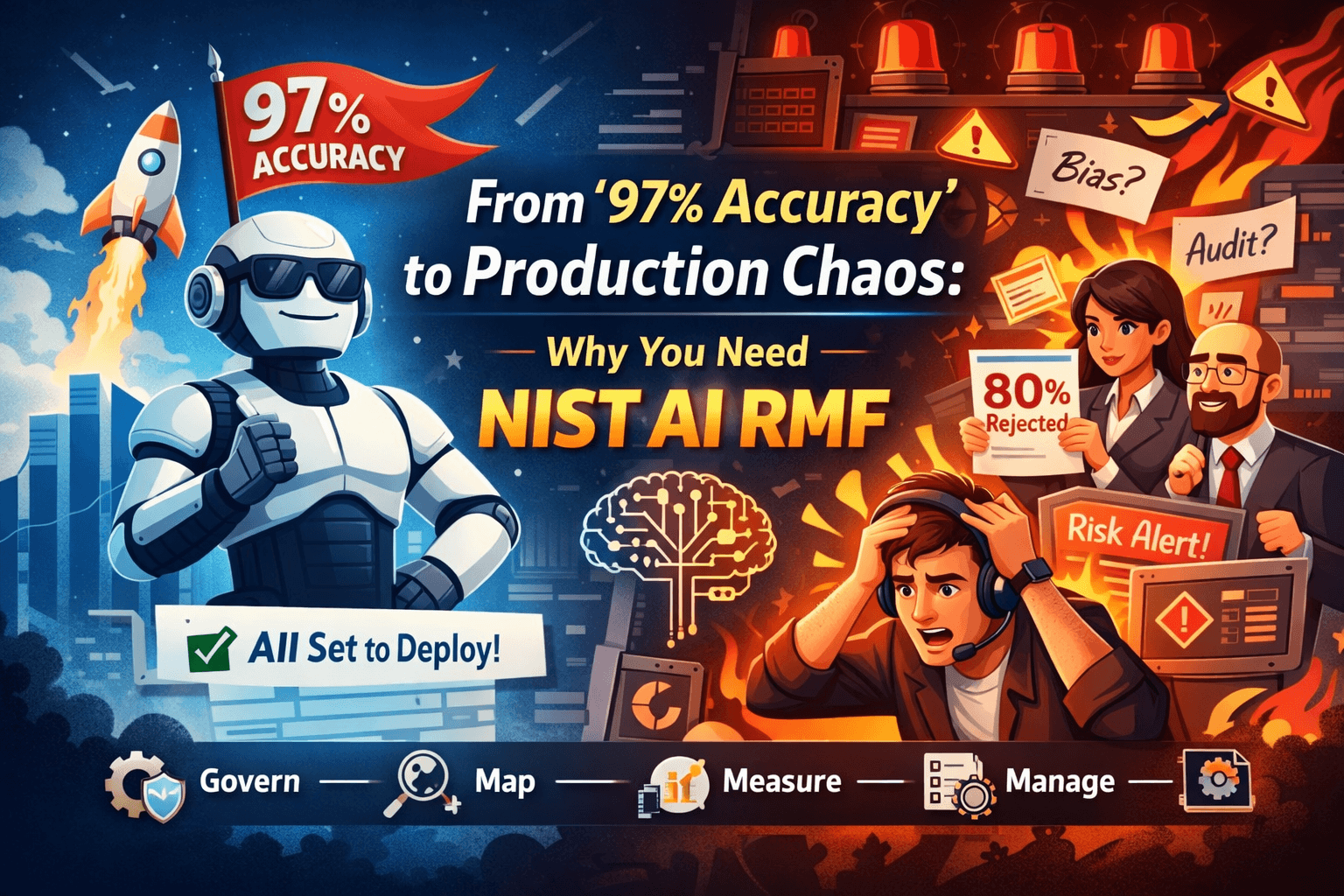

From “97% Accuracy” to Production Chaos: Why You Need NIST AI RMF


Imagine this…

You’ve just trained a model. Accuracy: 97%. Confidence: 100%. You deploy it.

Day 1 in production:

- The business team is confused
- Customers are impacted
- The compliance team is alarmed

Suddenly, your “intelligent system” becomes a risk amplifier.

What went wrong?

Not just the model. 👉 The missing piece was AI Risk Management.

This is exactly where the AI Risk Management Framework (AI RMF) from the National Institute of Standards and Technology (NIST) comes in.

🧠 What is NIST AI RMF?

The NIST AI RMF is a practical, voluntary framework designed to help organizations build trustworthy AI systems.

It focuses on ensuring AI is not just accurate, but:

- Safe
- Fair
- Transparent
- Secure
- Accountable

In short: 👉 It helps you move from “Can we build it?” to “Should we deploy it responsibly?”

🔥 The Real Problem: Accuracy ≠ Trust

Most AI teams focus heavily on:

- Model performance
- Training data
- Optimization

But in production, the real challenges are:

- Bias in real-world data
- Unexpected user behavior
- Lack of explainability
- Regulatory and compliance risks

That’s why a model with high accuracy in testing often fails in production.

👉 Because production ≠ lab environment

🧘‍♂️ The 4 Core Functions of NIST AI RMF (Explained Simply)

Think of AI RMF as a calm coach guiding your AI journey:

1. 🧠 Govern — “Who is responsible?”

Before building anything:

- Define ownership of AI systems
- Establish policies and guardrails
- Set risk tolerance

📌 Example: Who is accountable if your AI denies a legitimate loan?

2. 🗺️ Map — “Where can things go wrong?”

Understand:

- Use cases
- Stakeholders
- Impact scenarios

📌 Example: Your loan model may unintentionally disadvantage certain groups.

3. 📏 Measure — “Can we detect the risk?”

Evaluate:

- Bias
- Accuracy across segments
- Explainability
- Robustness

📌 Example: Does your model perform equally well for all demographics?
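One way to start answering that question is to break accuracy down by segment instead of reporting a single number. The sketch below is a minimal, standard-library-only illustration. The function names `accuracy_by_segment` and `largest_gap`, the record layout, and the toy data are assumptions made for this example; they are not part of NIST AI RMF.

```python
from collections import defaultdict


def accuracy_by_segment(records):
    """Return {segment: accuracy} for (segment, y_true, y_pred) records."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for segment, y_true, y_pred in records:
        total[segment] += 1
        if y_true == y_pred:
            correct[segment] += 1
    return {seg: correct[seg] / total[seg] for seg in total}


def largest_gap(per_segment):
    """Difference between the best and worst segment accuracy."""
    values = list(per_segment.values())
    return max(values) - min(values)


# Toy data (hypothetical): segment "A" dominates the evaluation set.
records = (
    [("A", 1, 1)] * 95 + [("A", 1, 0)] * 1   # 95 of 96 correct
    + [("B", 1, 1)] * 2 + [("B", 1, 0)] * 2  # 2 of 4 correct
)

overall = sum(t == p for _, t, p in records) / len(records)
per_segment = accuracy_by_segment(records)

print(f"overall accuracy: {overall:.2f}")
for seg, acc in sorted(per_segment.items()):
    print(f"segment {seg}: {acc:.2f}")
print(f"largest gap: {largest_gap(per_segment):.2f}")
```

In this toy set the overall accuracy is 97%, yet segment B is right only half the time. A single headline number can hide exactly the kind of gap the Measure step is meant to surface.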

4. ⚙️ Manage — “What will we do about it?”

Act on risks:

- Mitigate issues
- Monitor continuously
- Improve over time

📌 Example: Set alerts if rejection rates suddenly spike.
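A rejection-rate alert like this can be sketched as a sliding-window check against a baseline. This is a simplified illustration, not a production monitoring setup. The class name `RejectionRateMonitor`, the baseline of 20%, the window size, and the allowed increase are all hypothetical values chosen for the example.

```python
from collections import deque


class RejectionRateMonitor:
    """Flag when the recent rejection rate rises well above a baseline."""

    def __init__(self, baseline_rate, window_size=100, max_increase=0.15):
        self.baseline_rate = baseline_rate
        self.max_increase = max_increase
        # deque with maxlen drops the oldest decision automatically
        self.window = deque(maxlen=window_size)

    def record(self, rejected):
        """Record one decision; return True if an alert should fire."""
        self.window.append(1 if rejected else 0)
        if len(self.window) < self.window.maxlen:
            return False  # not enough data yet to judge
        recent_rate = sum(self.window) / len(self.window)
        return recent_rate > self.baseline_rate + self.max_increase


monitor = RejectionRateMonitor(baseline_rate=0.20, window_size=10, max_increase=0.15)

# Hypothetical stream: 10 normal decisions (2 rejections), then a burst of rejections.
stream = [False] * 8 + [True] * 2 + [True] * 5
alerts = [monitor.record(rejected) for rejected in stream]

print("first alert at decision index:", alerts.index(True))
```

In a real system the alert would go to whoever owns the model under the Govern step, and the baseline would come from validated historical data rather than a hard-coded number.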

💡 Real-World Scenario

Let’s revisit our “97% accuracy” model.

Without AI RMF:

- Model works in testing
- Fails in production
- Causes business and compliance issues

With AI RMF:

- Risks identified early
- Bias tested before deployment
- Monitoring in place
- Clear accountability

👉 Result: Trustworthy AI, not just smart AI
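To make the “with AI RMF” side concrete, a team could encode those four outcomes as a simple release gate. The sketch below assumes one yes/no check per AI RMF function. The `ReleaseChecklist` fields and the `missing_items` helper are hypothetical names for illustration; a real checklist would be longer and defined by your own governance process.

```python
from dataclasses import dataclass, fields


@dataclass
class ReleaseChecklist:
    """Hypothetical pre-deployment checks, one per AI RMF function."""
    owner_assigned: bool          # Govern: clear accountability
    risks_documented: bool        # Map: risks identified early
    bias_tested: bool             # Measure: bias tested before deployment
    monitoring_configured: bool   # Manage: monitoring in place


def missing_items(checklist):
    """Return the names of checks that have not been completed."""
    return [f.name for f in fields(checklist) if not getattr(checklist, f.name)]


checklist = ReleaseChecklist(
    owner_assigned=True,
    risks_documented=True,
    bias_tested=False,
    monitoring_configured=True,
)

blockers = missing_items(checklist)
if blockers:
    print("Deployment blocked, incomplete checks:", ", ".join(blockers))
else:
    print("All checks complete, ready for release review.")
```

Here the 97% model would be held back because bias testing has not been done, which is the point: the gate asks about risk, not only about accuracy.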

⚠️ Why This Matters More Than Ever

AI is no longer experimental. It’s:

- Making financial decisions
- Powering healthcare systems
- Driving customer experiences

A single failure can impact:

- Customers
- Brand reputation
- Regulatory standing

👉 AI risk is business risk

🎯 Practical Tips for Teams

If you’re an engineer, architect, or leader:

Start small:

- Add a risk checklist before deployment
- Include explainability reviews
- Monitor real-world performance

Think beyond code:

- Involve compliance and business teams early
- Document decisions
- Define accountability

Build habits:

- Continuous monitoring > one-time validation
- Responsible AI > fast AI

🚀 Final Thought

AI success is not defined by: 👉 How accurate your model is

It is defined by: 👉 How much your users trust it

💬 Remember:

“A powerful AI without governance is just an expensive mistake waiting to happen.”

And with frameworks like NIST AI RMF…

👉 You don’t just build AI systems

👉 You build responsible, reliable, and trusted AI