🎙️ When AI Learned to Listen: The Story of Voice Agents


1. The Silence Before the Voice

There was a time when technology only listened with its eyes.

It read our keystrokes, our clicks, and our taps — but it never truly heard us.

We spent years typing into boxes and waiting for screens to reply.

The relationship between humans and machines was efficient — but distant.

Then one day, something changed.

A quiet revolution began in the labs of speech recognition researchers —

machines started learning how to listen.

2. The First Words

It began awkwardly, like a child learning to speak.

“Hey Siri.”

“I didn’t quite catch that.”

Voice assistants were novel, but shallow —

they could recognize words, not meaning.

They followed commands, not conversations.

But in the background, AI was evolving.

As large language models grew more capable, as Speech-to-Text (STT) models became more accurate, and as Text-to-Speech (TTS) models became more expressive,

a new kind of intelligence was emerging —

one that could listen, think, and speak almost like us.

And thus, Voice Agents were born.

3. When Machines Found Their Voice

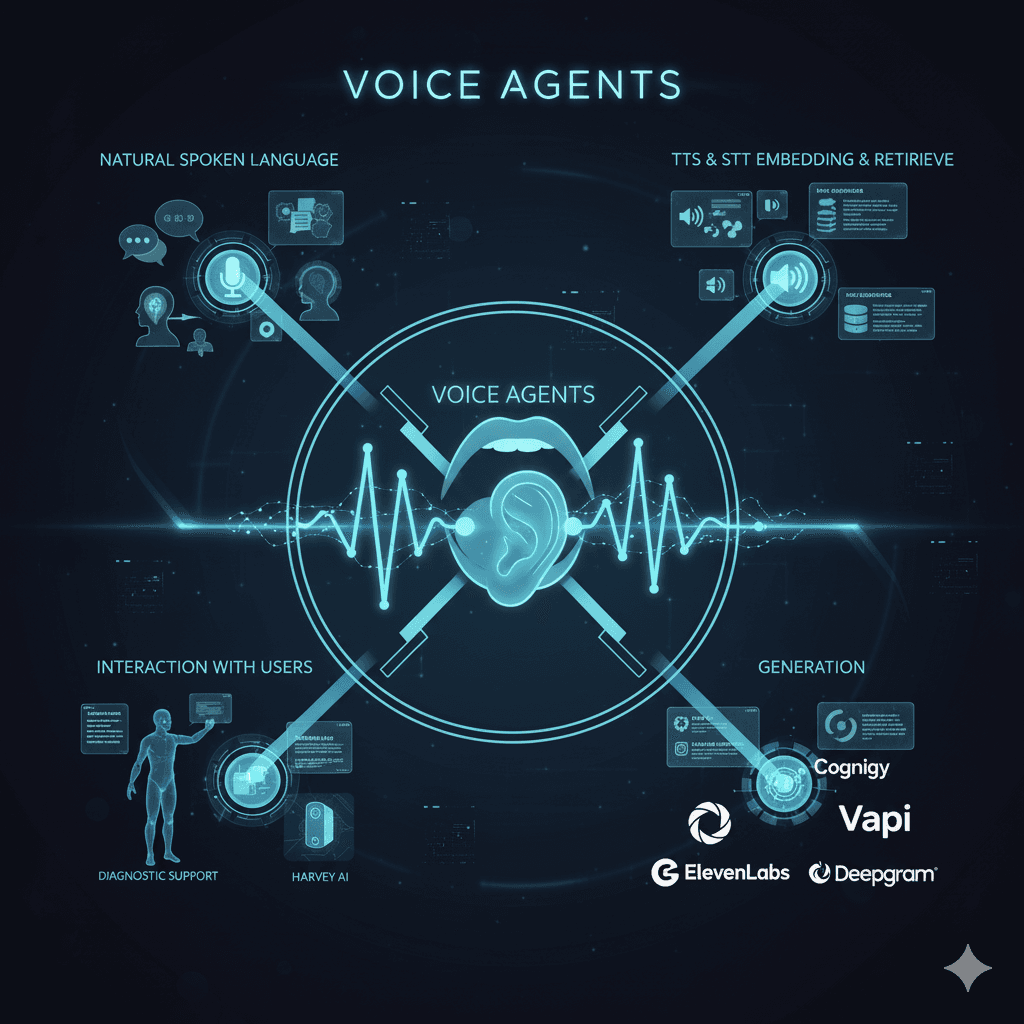

Unlike traditional bots, Voice Agents weren’t limited to text.

They could understand spoken language, reason through it, retrieve relevant data, and respond within moments, with natural emotion and tone.

Here’s what made them different:

They listened through Speech-to-Text (STT), converting spoken audio into text the system could interpret.

They reasoned using embeddings and retrieval, finding meaning and context.

They spoke back using Text-to-Speech (TTS) or Streaming TTS, sounding natural, even empathetic.
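To make the middle step concrete, here is a minimal sketch of retrieval over embeddings. It assumes the speech has already been transcribed to text, and it uses a toy bag-of-words vector in place of a real embedding model. The names `embed`, `cosine`, `retrieve`, and the sample `DOCS` are hypothetical and chosen only for illustration.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': a bag-of-words count vector.

    Assumption: a real voice agent would call an embedding model here.
    """
    return Counter(re.findall(r"[a-z0-9']+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    norm = norm_a * norm_b
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: list[str]) -> str:
    """Return the document whose vector is closest to the query's."""
    query_vec = embed(query)
    return max(documents, key=lambda doc: cosine(query_vec, embed(doc)))


# Hypothetical knowledge base for a banking voice agent.
DOCS = [
    "Our branch opening hours are 9am to 5pm on weekdays.",
    "To reset your card PIN, visit any ATM and choose PIN services.",
    "Savings accounts earn interest that is paid monthly.",
]

# Pretend this string came out of the STT stage.
transcript = "how do I reset my card PIN"
print(retrieve(transcript, DOCS))
```

The retrieved passage would then be handed to the language model and the TTS stage. Swapping the toy `embed` for a learned embedding lets the agent match on meaning rather than on shared words.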

Conversations with AI suddenly became… human.

4. The New Voices of Innovation

Across industries, these intelligent voices began to appear everywhere:

🏥 In hospitals, voice agents listened to doctors dictate patient notes,

transcribed them with high accuracy, and even reminded patients about medication.

🏦 In banks, they answered customer queries in real time,

explaining products in plain, friendly language.

🏢 In enterprises, they joined customer support teams,

handling thousands of calls without ever losing patience.

🎓 In classrooms, they gave voice to learning,

helping children and visually impaired students understand complex topics.

Technology wasn’t just responding anymore — it was connecting.

5. The Pioneers Behind the Voices

A few companies are leading this transformation:

🎧 ElevenLabs — creating emotionally rich voices that sound real, not robotic.

🤖 Cognigy — empowering enterprises with conversational voice-first platforms.

☎️ Vapi — enabling developers to build custom voice AI agents through simple APIs.

🗣️ Deepgram — bringing real-time, high-accuracy speech recognition to scale.

Each of them adds a new tone, rhythm, and soul to the world of spoken AI.

6. The Symphony of Intelligence

Here’s what happens behind that smooth conversation you have with a Voice Agent:

🎙️ You speak — and AI listens.

💭 It understands — not just words, but intent and emotion.

🔍 It retrieves — searching databases or APIs in real time.

🗣️ It replies — instantly, with a voice that sounds warm, confident, and alive.
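The four steps above can be sketched as a single conversational turn. This is an illustrative skeleton under stated assumptions, not any vendor's API: every stage is a plain function, the STT and TTS stages are stand-ins that treat bytes as UTF-8 text, and all names (`VoiceAgent`, `fake_stt`, `keyword_intent`, `lookup`, `fake_tts`, `ANSWERS`) are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class VoiceAgent:
    """One turn = listen -> understand -> retrieve -> reply."""
    stt: Callable[[bytes], str]        # audio in, transcript out
    understand: Callable[[str], str]   # transcript in, intent label out
    retrieve: Callable[[str], str]     # intent in, answer text out
    tts: Callable[[str], bytes]        # answer text in, audio out

    def handle_turn(self, audio: bytes) -> bytes:
        transcript = self.stt(audio)
        intent = self.understand(transcript)
        answer = self.retrieve(intent)
        return self.tts(answer)


# Stand-in stages. A real agent would call speech and language services here.
def fake_stt(audio: bytes) -> str:
    return audio.decode("utf-8")


def keyword_intent(transcript: str) -> str:
    text = transcript.lower()
    if "balance" in text:
        return "check_balance"
    if "hours" in text:
        return "opening_hours"
    return "unknown"


ANSWERS = {
    "check_balance": "Your balance is available in the mobile app.",
    "opening_hours": "We are open 9am to 5pm on weekdays.",
}


def lookup(intent: str) -> str:
    return ANSWERS.get(intent, "Sorry, I did not catch that.")


def fake_tts(text: str) -> bytes:
    return text.encode("utf-8")


agent = VoiceAgent(fake_stt, keyword_intent, lookup, fake_tts)
reply = agent.handle_turn(b"What are your opening hours?")
print(reply.decode("utf-8"))
```

Keeping each stage behind a simple callable means a streaming speech recognizer, a language model, or a different voice can replace a stand-in without changing the turn logic.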

It’s not just dialogue; it’s a human-AI duet.

7. The Future Speaks

Imagine calling customer care and never being put on hold.

Imagine your car understanding your tone when you’re stressed.

Imagine your enterprise dashboard talking to you, not waiting for you to click.

That’s where Voice Agents are taking us —

a world where speaking to technology feels as natural as talking to a friend.

Because the future of AI isn’t typed.

It’s spoken.

🎤 Voice Agents are where technology stops waiting for input — and starts listening.