The Curious Mind of AI: How Attention and Bias Shape Its Thinking


Imagine a child learning to read.

At first, they look at every word on the page: slowly, carefully, sometimes losing the meaning of the whole sentence. But as they grow, they start to focus on the right words and to understand tone, context, and emotion. They no longer read word by word; they grasp the story.

That’s exactly how Artificial Intelligence learned to understand language better — through something called the Attention Mechanism.

🌟 The Birth of Attention

Before 2017, AI models like RNNs (Recurrent Neural Networks) and LSTMs (Long Short-Term Memory networks) tried to read language the old-fashioned way — word by word.

They could understand short sentences but stumbled when the story got long. They’d forget what happened earlier, much like someone remembering the end of a movie but forgetting the beginning.

Then, in 2017, came the groundbreaking paper titled “Attention Is All You Need” by Vaswani et al.

It changed everything.

This wasn’t just a new technique — it was a new way of thinking.

The paper introduced Transformers, the architecture behind modern AI systems like GPT, BERT, and countless others.

At its heart was one elegant idea:

Instead of remembering everything equally, what if the model could decide what to focus on?

💡 How Attention Works (Simply Told)

Imagine you’re trying to understand the sentence:

“The cat sat on the mat because it was tired.”

When you reach the word “it”, your brain naturally asks,

“Who or what is ‘it’ referring to?”

You scan the earlier words and quickly realize — it’s the cat.

That tiny act of focusing — connecting “it” to “cat” — is what the Attention Mechanism does inside an AI model.

It looks at all the words, assigns each one an importance score, and pays more attention to the words that matter most for understanding context.

It’s like shining a flashlight over a paragraph — some words glow brightly, others fade into the background.
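To make the flashlight picture concrete, here is a minimal sketch of how raw importance scores can become attention weights. The scores below are invented for illustration and do not come from a real model; the only real technique shown is the softmax function, which turns any list of scores into positive weights that sum to 1.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical raw scores for how relevant each word is to "it".
# These numbers are made up for illustration, not taken from a real model.
words = ["The", "cat", "sat", "on", "the", "mat", "because", "it", "was", "tired"]
raw_scores = [0.1, 3.0, 0.5, 0.1, 0.1, 1.5, 0.2, 0.3, 0.2, 1.0]

weights = softmax(raw_scores)
brightest = words[weights.index(max(weights))]

# Print each word next to its weight; higher weight means "glows brighter".
for word, weight in zip(words, weights):
    print(f"{word:>8}  {weight:.2f}")
```

With these made-up scores, “cat” ends up with the largest weight, which matches the intuition that “it” refers to the cat.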

⚙️ A Glimpse Inside the Machine

In technical terms, attention uses three key components:

Query (Q): What the current word is looking for.

Key (K): What each word offers as a clue.

Value (V): The actual meaning or content carried by each word.

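The model measures how similar the Query is to each Key (in Transformers, with a scaled dot product), turns those scores into weights that sum to 1, and then uses the weights to take a weighted average of the Values. The result?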

A context-aware understanding of every word in a sentence.
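The Query, Key, and Value steps above can be sketched in a few lines of plain Python. This is a simplified, single-head version of the scaled dot-product attention described in “Attention Is All You Need”, written with lists instead of a tensor library. The toy vectors are assumptions made up for this example; a real model learns its Q, K, and V from data.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of vectors.

    Returns (outputs, weights): one output vector and one row of
    attention weights per query.
    """
    scale = math.sqrt(len(keys[0]))  # square root of the key dimension
    outputs, all_weights = [], []
    for q in queries:
        # 1. Similarity between this query and every key.
        scores = [dot(q, k) / scale for k in keys]
        # 2. Scores become weights that sum to 1.
        row = softmax(scores)
        # 3. Weighted average of the value vectors.
        out = [sum(w * v[i] for w, v in zip(row, values))
               for i in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(row)
    return outputs, all_weights

# Toy 2-dimensional vectors, invented for illustration.
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
queries = [[4.0, 0.0]]

outputs, weights = attention(queries, keys, values)
print(weights[0])
print(outputs[0])
```

Here the query points in the same direction as the first key, so the first and third keys receive most of the weight and the output leans toward their values.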

This is how AI can now write essays, translate languages, summarize news, or even chat with you, in large part thanks to attention.

🤖 When Machines Mirror Us: The Emergence of Bias

But there’s another side to this story — one that’s more human than technical.

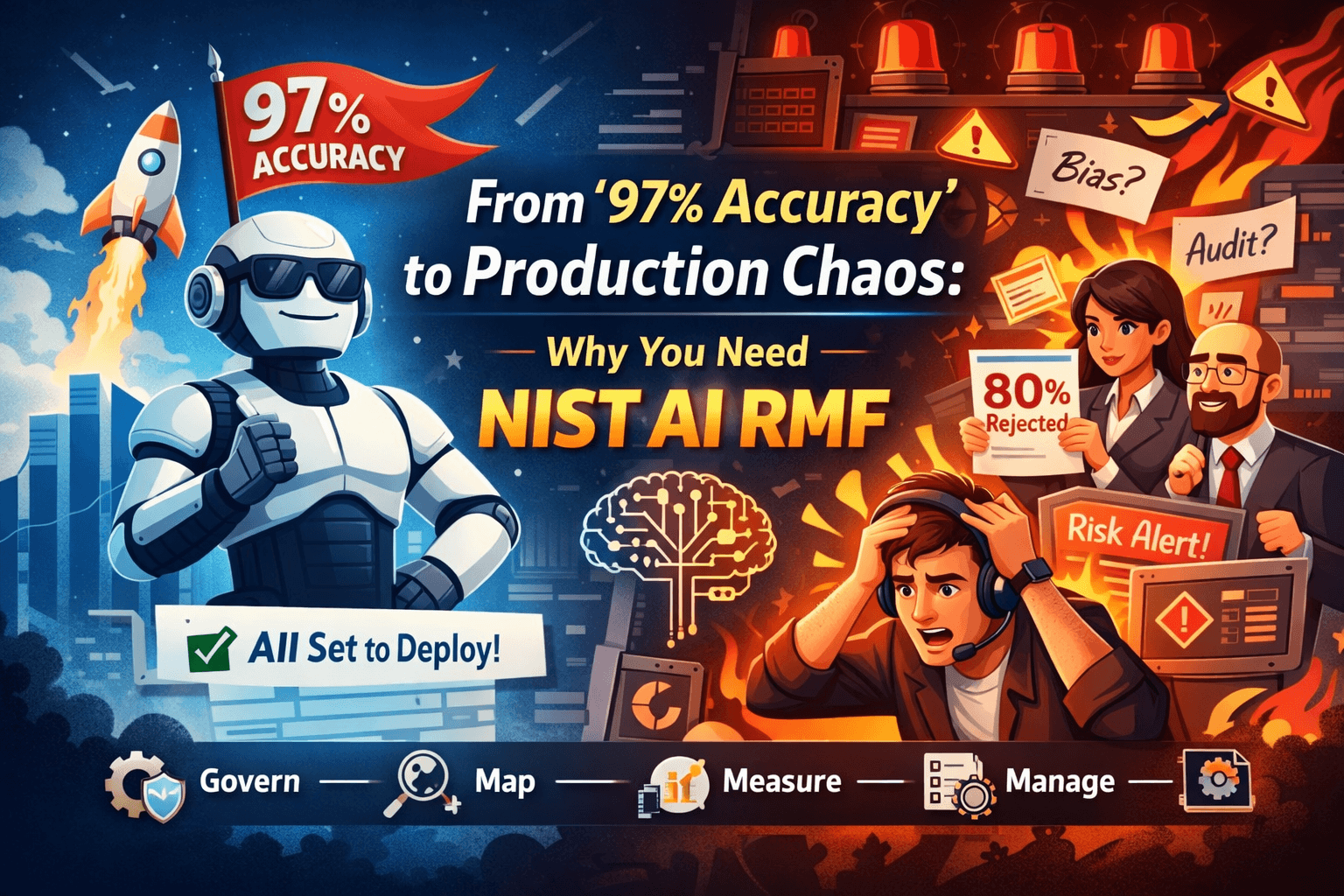

As AI became more powerful, we began to notice something unsettling.

The same brilliance that allowed it to “pay attention” also made it mirror our own biases.

After all, AI learns from our data — from texts, images, job descriptions, social media posts, and history itself. And our history, as we know, isn’t always fair or balanced.

⚠️ The Faces of Bias

Bias in AI can appear in many forms:

A hiring algorithm trained mostly on resumes from men favoring men over women.

A facial recognition system misidentifying people with darker skin tones more often than people with lighter skin tones.

A chatbot associating certain professions or traits with specific genders or regions.

These biases don’t come from malice — they come from data.

Data that reflects our collective past decisions, stereotypes, and inequalities.

🔍 When Attention Reveals (and Amplifies) Bias

Here’s where it gets interesting — the Attention Mechanism can actually reveal bias.

Researchers can visualize attention maps to see which words or patterns a model focuses on.

For instance, if an AI consistently pays more attention to “he” when interpreting words like “leader” or “doctor,” that’s a clue.

Attention weights act like a partial mirror: they hint at what the model treats as important, even though they are not a complete explanation of its decisions. That reflection can still expose our own societal shadows.

Sometimes, though, the same mechanism can amplify bias — by giving even more weight to already dominant patterns in the data.
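The “he” versus “doctor” clue can be turned into a simple number. The sketch below assumes you have already extracted, for each sentence, the row of attention weights belonging to the word “doctor”. The sentences and weights here are hypothetical, and `mean_attention_to` is a helper name invented for this example, not part of any library.

```python
# Each example: the tokens of a sentence and the attention row for the
# word "doctor" (one weight per token). All numbers are invented.
examples = [
    (["he", "is", "a", "doctor"],     [0.50, 0.10, 0.10, 0.30]),
    (["she", "is", "a", "doctor"],    [0.20, 0.20, 0.20, 0.40]),
    (["he", "was", "the", "doctor"],  [0.45, 0.15, 0.10, 0.30]),
    (["she", "was", "the", "doctor"], [0.25, 0.20, 0.15, 0.40]),
]

def mean_attention_to(pronoun, examples):
    """Average weight the 'doctor' row gives to `pronoun`,
    over the sentences that contain it."""
    picked = [row[tokens.index(pronoun)]
              for tokens, row in examples if pronoun in tokens]
    return sum(picked) / len(picked)

he_avg = mean_attention_to("he", examples)
she_avg = mean_attention_to("she", examples)
gap = he_avg - she_avg

print(f"he: {he_avg:.3f}  she: {she_avg:.3f}  gap: {gap:+.3f}")
```

A consistently positive gap across many sentence pairs would be a clue worth investigating, not proof of bias on its own.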

🛠️ Teaching AI to Pay Fair Attention

The AI community is now working hard to make attention fairer.

Bias detection tools analyze which tokens or groups get more focus.

Debiasing techniques retrain or fine-tune models on rebalanced or reweighted datasets.

Ethical AI frameworks set rules for transparency and accountability.
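As one small illustration of the “balanced datasets” idea, here is a sketch of inverse-frequency reweighting: each training example gets a weight so that every group contributes the same total weight. This is only one of several rebalancing approaches, and it covers the data-preparation step, not the retraining itself. The group labels and the `balancing_weights` helper are hypothetical.

```python
from collections import Counter

def balancing_weights(groups):
    """Per-example weights so every group has the same total weight."""
    counts = Counter(groups)
    n_groups = len(counts)
    total = len(groups)
    # Rare groups get larger weights, common groups get smaller ones.
    return [total / (n_groups * counts[g]) for g in groups]

# Hypothetical group labels for a resume dataset skewed 4 to 1.
groups = ["male", "male", "male", "male", "female"]
weights = balancing_weights(groups)

male_total = sum(w for g, w in zip(groups, weights) if g == "male")
female_total = sum(w for g, w in zip(groups, weights) if g == "female")

print(weights)
print(male_total, female_total)
```

After reweighting, both groups carry the same total weight, so a training loss that uses these weights would no longer be dominated by the larger group.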

In a sense, we are teaching AI not just how to think, but how to think responsibly.

💬 The Moral of the Story

The Attention Mechanism gave AI the power to understand — not just to process data, but to find meaning in it.

But with that power came reflection — of all that’s brilliant and flawed in the human world.

Attention made AI more like us.

And bias reminded us that we still have much to learn — not about coding, but about ourselves.

As creators, our job isn’t just to train smarter models, but kinder ones — machines that don’t just see what’s there, but understand why it matters.

✨ In a Single Line

“Attention taught AI where to look; fairness must teach it how to see.”