“Attention Is All You Need” — How AI Learned to Understand Us


It was late evening.

Riya sat in front of her laptop, staring at lines of text and code.

Her model — a simple RNN — had just failed again.

She sighed.

“Why can’t you understand that ‘it’ refers to ‘the animal’ and not ‘the street’?”

Her model didn’t answer, of course. It just kept misinterpreting sentences, forgetting what came before.

At that moment, her mentor, Dr. Iyer, walked in.

He smiled and said,

“Still fighting with your forgetful model? Let me tell you a story about how AI learned to pay attention.”

🧩 Chapter 1: The Old Way — Word by Word

Before 2017, most AI models that processed language — like RNNs (Recurrent Neural Networks) and LSTMs (Long Short-Term Memory networks) — had one big problem:

They had to process text sequentially, one word at a time, squeezing everything read so far into a single fixed-size memory.

Think of it like reading a novel with a tiny flashlight — you see one line, but forget what was on the previous page.

Riya remembered how her RNN worked:

It read each word.

Updated its memory.

Tried to carry forward the meaning.

But the longer the sentence got, the more it forgot.

By the time it reached the end, the beginning was a blur.

For example:

“The book that the professor who taught the class wrote was amazing.”

By the time the model saw “amazing”, it barely remembered “book.”

Riya felt that pain every day — her model just couldn’t connect the dots.
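To make the forgetting concrete, here is a minimal NumPy sketch of a plain tanh RNN. It is not Riya's actual model, which the story never shows. The weights are random, and the recurrent matrix `W_h` is deliberately kept small, an assumption chosen so the fading effect is easy to see. The sketch nudges only the first word and measures how far each later memory state moves.

```python
import numpy as np

def rnn_forward(inputs, W_x, W_h):
    """Run a plain tanh RNN over the inputs, one step at a time."""
    h = np.zeros(W_h.shape[0])
    states = []
    for x in inputs:  # strictly sequential: step t needs the memory from step t-1
        h = np.tanh(W_x @ x + W_h @ h)
        states.append(h)
    return np.stack(states)

rng = np.random.default_rng(0)
seq_len, d_in, d_hidden = 12, 4, 8  # illustrative sizes, not from the article

W_x = rng.normal(scale=0.5, size=(d_hidden, d_in))
# Assumption: small recurrent weights, so old information shrinks at every step.
W_h = rng.normal(scale=0.1, size=(d_hidden, d_hidden))
inputs = rng.normal(size=(seq_len, d_in))  # random stand-ins for word vectors

# Change only the first "word" and compare the memory at every step.
perturbed = inputs.copy()
perturbed[0] += 1.0

base = rnn_forward(inputs, W_x, W_h)
changed = rnn_forward(perturbed, W_x, W_h)
influence = np.linalg.norm(changed - base, axis=1)

print(influence.round(6))  # how much the first word still matters at each step
```

In this setup the printed numbers should shrink from left to right: by the last step the first word has almost no say in the memory. Trained RNNs and LSTMs behave less predictably than this toy, but the same fading is what makes long sentences hard for them.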

💡 Chapter 2: The Breakthrough — Attention

Dr. Iyer pulled up a paper on his laptop:

“Attention Is All You Need” (Vaswani et al., 2017): the paper that introduced the Transformer and changed everything.

He explained,

“Imagine you’re reading that same sentence. You don’t look at words one by one.

You read the whole thing and instantly know which words are connected.

That’s what Attention does — it helps the model focus on the right words.”

Riya leaned forward. “So it doesn’t forget?”

“Exactly,” said Dr. Iyer. “It doesn’t have to squeeze everything into one memory. It can look back at any word directly and focus on what’s important.”

In simple terms, Attention allows a model to:

Look at all the words in a sentence at once.

Decide which words are most relevant to understand the current one.

Combine those pieces of information smartly.

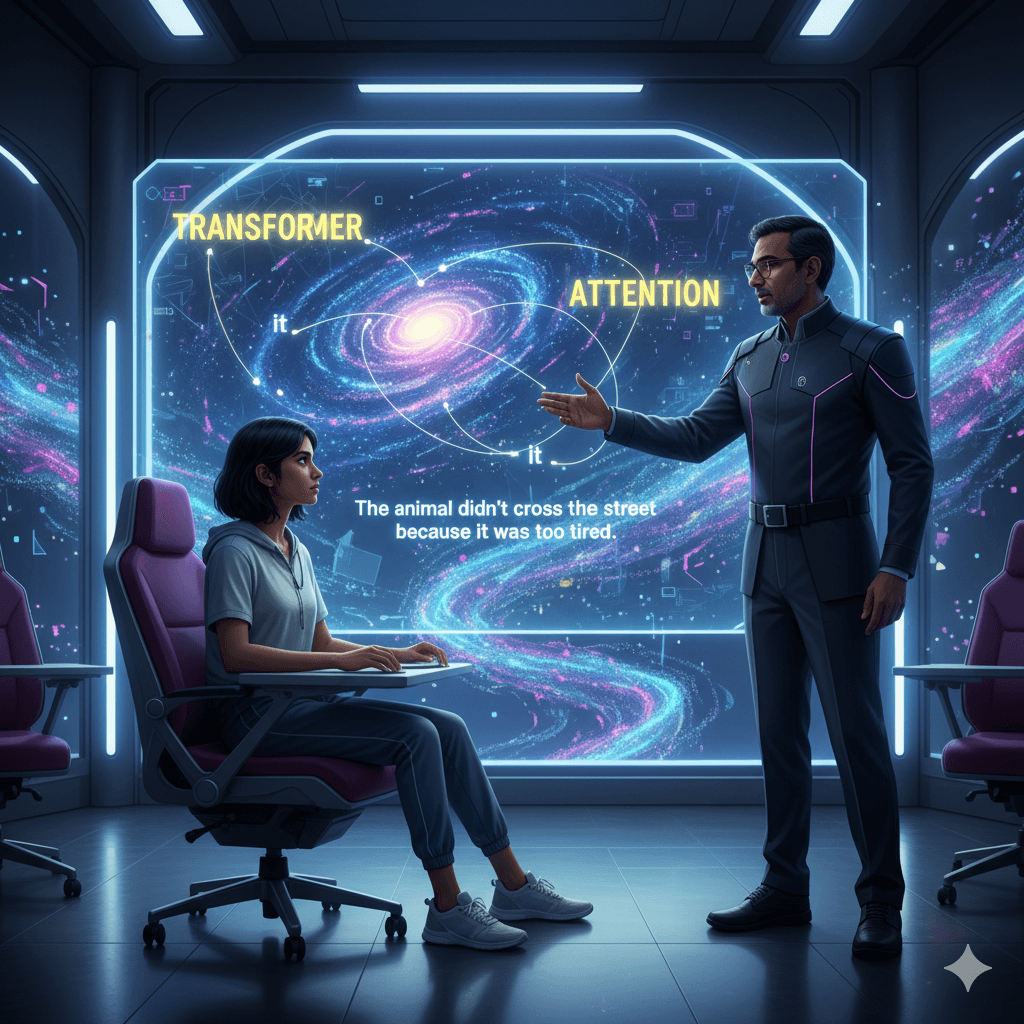

🧠 Chapter 3: The Magic of Self-Attention

Let’s break it down simply.

Every word in a sentence has three hidden roles:

Query (Q) – What am I looking for?

Key (K) – What do I have to offer?

Value (V) – What meaning do I carry?

When a model processes a sentence, every word compares its Query with every other word’s Key — like saying:

“How relevant are you to me?”

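Then it turns those similarity scores into weights that add up to 1 (using a softmax), and blends every word’s Value according to its weight. A word with a high weight contributes a lot; a word with a low weight barely counts.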

For example, in the sentence:

“The animal didn’t cross the street because it was too tired.”

When processing “it,” the model looks at every other word:

“animal” → high attention

“street” → low attention

“tired” → moderate attention

And so it understands that “it” most likely refers to “animal.”

This is Self-Attention, because the sentence attends to itself: every word looks at the other words in the same sentence.
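The Query, Key, and Value steps above can be sketched in a few lines of NumPy. This follows the scaled dot-product form from the paper, softmax(QKᵀ / √d_k)·V, but everything else is an assumption for illustration: the embeddings `X` and the projection matrices `W_q`, `W_k`, `W_v` are random, and the sizes `d_model` and `d_k` are arbitrary.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(X, W_q, W_k, W_v):
    """Single-head scaled dot-product self-attention over one sentence."""
    Q, K, V = X @ W_q, X @ W_k, X @ W_v      # every word gets a Query, Key, Value
    scores = Q @ K.T / np.sqrt(K.shape[-1])  # "how relevant are you to me?"
    weights = softmax(scores, axis=-1)       # each row sums to 1
    return weights @ V, weights              # weighted blend of Values

tokens = ["The", "animal", "didn't", "cross", "the", "street",
          "because", "it", "was", "too", "tired"]

rng = np.random.default_rng(0)
d_model, d_k = 16, 8                         # illustrative sizes
X = rng.normal(size=(len(tokens), d_model))  # random stand-ins for word embeddings
W_q = rng.normal(size=(d_model, d_k))
W_k = rng.normal(size=(d_model, d_k))
W_v = rng.normal(size=(d_model, d_k))

output, weights = self_attention(X, W_q, W_k, W_v)

# Attention paid by "it" to every word in the sentence
it_row = weights[tokens.index("it")]
for tok, w in zip(tokens, it_row):
    print(f"{tok:>8s}  {w:.3f}")
```

Because the weights here are random, the printed numbers carry no meaning. The "animal high, street low" pattern only appears once training has shaped `W_q`, `W_k`, and `W_v`.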

🚀 Chapter 4: Multi-Head Attention — Many Minds Thinking Together

Riya nodded but looked puzzled again.

“So if it’s comparing every word to every other word just once, isn’t that too simple?”

Dr. Iyer smiled, “That’s where Multi-Head Attention comes in.”

Instead of doing this once, the model does it multiple times in parallel, each time focusing on different aspects:

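One head might track grammar (subject, verb, object).

Another might focus on meaning.

Another on position, such as which word comes next.

It’s like having a team of experts, each one analyzing the same sentence from a different angle, and then combining their findings. Nobody assigns these roles: each head learns what to look for during training, and what it learns is not always easy to label.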

That’s why Transformers are so powerful — they understand context, relationships, and subtle meaning all at once.
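Here is a rough NumPy sketch of that "team of experts" idea, assuming the usual trick of slicing the model dimension into equal parts, one per head. The names `W_q`, `W_k`, `W_v`, `W_o` and the sizes are illustrative, and the weights are random rather than trained.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def multi_head_attention(X, W_q, W_k, W_v, W_o, num_heads):
    """Run several attention heads in parallel, then merge their findings."""
    seq_len, d_model = X.shape
    d_head = d_model // num_heads

    def split(M):
        # (seq_len, d_model) -> (num_heads, seq_len, d_head)
        return M.reshape(seq_len, num_heads, d_head).transpose(1, 0, 2)

    Q, K, V = split(X @ W_q), split(X @ W_k), split(X @ W_v)
    scores = Q @ K.transpose(0, 2, 1) / np.sqrt(d_head)  # one score grid per head
    weights = softmax(scores, axis=-1)                   # (heads, seq, seq)
    heads = weights @ V                                  # (heads, seq, d_head)

    # Glue the heads back together and mix them with one final projection.
    concat = heads.transpose(1, 0, 2).reshape(seq_len, d_model)
    return concat @ W_o, weights

rng = np.random.default_rng(0)
seq_len, d_model, num_heads = 6, 16, 4  # illustrative sizes
X = rng.normal(size=(seq_len, d_model))
W_q, W_k, W_v, W_o = (rng.normal(size=(d_model, d_model)) for _ in range(4))

out, weights = multi_head_attention(X, W_q, W_k, W_v, W_o, num_heads)
print(out.shape)      # one vector per word, same width as the input
print(weights.shape)  # one attention grid per head
```

Each head works on its own slice of the vector, so the heads are free to attend to different words. The final `W_o` projection is the step that "combines their findings."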

🏗️ Chapter 5: The Transformer Architecture

Now, Dr. Iyer drew two big blocks on the board:

Encoder

Decoder

“These two form the brain of a Transformer,” he said.

The Encoder reads and understands the input text.

The Decoder takes that understanding and produces an output — a translation, a summary, or even new text.

Each of these blocks has multiple layers, and each layer has:

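Multi-Head Self-Attention – to see all words at once (the Decoder sees only the words produced so far, and adds a second attention step over the Encoder’s output).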

Feed-Forward Neural Network – to refine understanding.

Normalization and Skip Connections – to keep learning stable and fast.
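The three ingredients above can be wired together in a small NumPy sketch of one Encoder layer. To keep it short, it makes some simplifying assumptions: a single attention head stands in for Multi-Head Self-Attention, layer normalization has no learnable scale or shift, and all weights in the hypothetical `params` dictionary are random.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def layer_norm(x, eps=1e-5):
    """Normalize each word vector to zero mean and unit variance."""
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

def self_attention(X, W_q, W_k, W_v):
    """Single-head stand-in for the multi-head attention sub-layer."""
    Q, K, V = X @ W_q, X @ W_k, X @ W_v
    weights = softmax(Q @ K.T / np.sqrt(K.shape[-1]), axis=-1)
    return weights @ V

def feed_forward(X, W1, b1, W2, b2):
    """Two linear maps with a ReLU in between, applied to each word separately."""
    return np.maximum(0, X @ W1 + b1) @ W2 + b2

def encoder_layer(X, p):
    # Sub-layer 1: attention, skip connection, then normalization
    X = layer_norm(X + self_attention(X, p["W_q"], p["W_k"], p["W_v"]))
    # Sub-layer 2: feed-forward, skip connection, then normalization
    return layer_norm(X + feed_forward(X, p["W1"], p["b1"], p["W2"], p["b2"]))

rng = np.random.default_rng(0)
seq_len, d_model, d_ff = 5, 16, 64  # illustrative sizes
params = {
    "W_q": rng.normal(scale=0.1, size=(d_model, d_model)),
    "W_k": rng.normal(scale=0.1, size=(d_model, d_model)),
    "W_v": rng.normal(scale=0.1, size=(d_model, d_model)),
    "W1": rng.normal(scale=0.1, size=(d_model, d_ff)),
    "b1": np.zeros(d_ff),
    "W2": rng.normal(scale=0.1, size=(d_ff, d_model)),
    "b2": np.zeros(d_model),
}

X = rng.normal(size=(seq_len, d_model))
out = encoder_layer(X, params)
print(out.shape)  # same shape as the input, so layers can be stacked
```

Because the output has the same shape as the input, stacking layers is just a loop that feeds each layer's output into the next one.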

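Unlike RNNs, Transformers don’t have to wait for one word after another.

During training they process all the words of a sequence in parallel, which makes them fast to train and easy to scale. Since attention by itself ignores word order, the paper also adds positional encodings to the input so the model still knows which word came first. (When generating text, the Decoder still produces one word at a time, but each new word can attend directly to everything before it.)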

🌍 Chapter 6: The Revolution

Riya finally ran her first Transformer model.

The results stunned her.

Her model now handled long sentences, and even picked up on sarcasm and subtle context, far better than her RNN ever had.

Words like “bank” were no longer confusing:

In “river bank,” it thought of nature.

In “credit bank,” it thought of finance.

Transformers became the foundation of almost every modern NLP model:

BERT – for understanding text.

GPT – for generating text.

T5 and BLOOM – for translation, summarization, and more.

In just a few years, Attention changed the entire landscape of AI — from chatbots and translators to creative writing tools.

💬 Chapter 7: A Lesson Beyond Technology

As the model trained, Riya smiled.

“You were right, Professor. Attention really is all we need.”

Dr. Iyer nodded.

“Yes. In machines and in life — what you focus on decides what you understand.”

That evening, Riya realized that Transformers weren’t just about algorithms.

They were about the art of focus — how even machines become smarter when they learn to pay the right kind of attention.

💭 Final Thought

The story of Transformers isn’t just about AI — it’s about how focus transforms understanding.

Whether it’s a model reading a sentence or a person living a day —

The secret lies in paying attention to what truly matters.